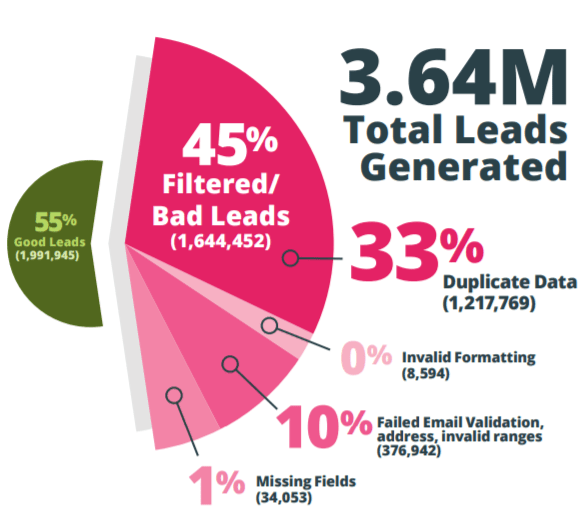

Have you ever considered how badly duplicate data impairs the productivity and efficiency of your business processes, and the negative implications it has for your company as a whole? As more and more companies explore Big Data to offer better customer experiences across various channels, they face several challenges related to duplicate data. Research says that at least 15% of a lead database consists of duplicate records and that businesses lose around 20% of revenue due to bad data quality. Duplicate records, multiple instances of a single lead, redundant data: no matter what you call them, they are your biggest nemesis!

Customer data is the backbone of most businesses these days, and duplicate data is one of the most agonizing issues that can afflict your company’s contact database. Today there are innumerable options for acquiring, cultivating and verifying leads. A lead record ideally gets created through one source and is then validated and enriched by several sources over time. With each refinement effort, most marketers keep both the old and the new data until consolidation, so that they can return to the old data if required. Over time, however, as work schedules get swamped and the database grows even larger, validating and consolidating records becomes harder to manage and is mostly delayed. Consequently, duplicate records keep accumulating, leaving multiple instances of a single record in various locations that become very difficult to track.

There are a number of ways in which a database full of duplicate records adversely affects a company. Let us look at some of the negative implications from a business perspective.

- Wasted marketing effort: A database with significant duplicate records wastes effort across many marketing activities. Sending the same email repeatedly to a lead can result in a poor customer experience, and the person might eventually unsubscribe from your emails. Email service providers may also push unopened emails to the spam folder. The additional cost of repeatedly sending the same email to a single person becomes massive at scale. If a company markets through a direct mail service, the cost of sending multiple catalogs, the money wasted on duplicate print, and the postage costs are massive too. There is also a negative impact on response rates and on the overall ROI of the marketing activities. Moreover, segmenting prospects becomes very difficult without an accurate view, which makes it harder to target customers with appropriate marketing campaigns.

- Poor brand perception: A company’s reputation suffers badly if a single prospect is repeatedly sent the same mail or called repeatedly for the same purpose. This looks very unprofessional and is likely to make prospects and customers wary.

- Unnecessary data storage: A significant underlying issue with a database having duplicate records is the unnecessary data storage required and the cost involved.

- Missed opportunities: Bad data quality linked to duplicate or inaccurate records can cause the sales team to miss out on various sales opportunities. Following multiple instances of a single lead might keep the representatives from focusing on nurturing better opportunities or looking for new ones.

- Inefficient customer service: Customer service becomes less efficient because of duplicate data problems. Duplicate or inaccurate data drastically impacts almost every touchpoint in the customer journey. From the customer service perspective, the team might find it difficult to match a customer to the accurate record when multiple records are present. This can result in delayed response times and lower resolution rates, which can damage customer relationships and be a setback to the team as well.

- Poor customer experience: With multiple records for a single customer, it is very difficult to create a single view of customers and their behaviors. Poor customer service is bound to result in customer dissatisfaction, as the customer might face delayed resolution or, in the worst case, no response at all! Note also that a good-quality database helps provide more personalized interactions, which are essential for converting leads and nurturing them in the long run.

- Over-projection: A significant number of duplicates in the database can cause the marketing and sales departments to over-project! Simple arithmetic shows why. Assume that you have around 1000 leads in your database and your team projects that well-planned marketing campaigns will convert around 30% of them, which comes to 300. If the database has around 15% duplicate records, you are left with just 850 unique records. If about 20% of them actually buy, you will have around 170 buyers, which is just over half of your projection. And that is probably a best-case scenario!

- Productivity issues: A database with a significant amount of duplicate data can lead to low productivity. If the sales representatives do realize that they are facing duplicate data problems and try to handle these problems themselves, a significant amount of productivity is lost. The representatives start rechecking the information in the database before contacting a prospect. This wastes considerable time and also frustrates the sales representatives over time. Even if the reps try to get rid of duplicate data using complicated Excel formulas, which might solve only part of the problem, they risk missing duplicate records when the database is too large, or deleting user data in the process.
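
The over-projection example in the list above can be checked with a few lines of Python. This is only an illustrative sketch: the function name and its parameters are made up for this post, and the percentages are the assumed figures from the example, not measured values.

```python
def projection_gap(total_leads, projected_pct, duplicate_pct, actual_pct):
    """Compare a projection made on the raw lead count with the outcome
    on unique leads. All percentages are whole numbers (30 means 30%)."""
    # Projection made on the raw database, duplicates included
    projected_buyers = total_leads * projected_pct // 100
    # Leads that remain once duplicate records are discounted
    unique_leads = total_leads * (100 - duplicate_pct) // 100
    # Buyers you actually get from the unique leads
    actual_buyers = unique_leads * actual_pct // 100
    return projected_buyers, unique_leads, actual_buyers


projected, unique, actual = projection_gap(1000, 30, 15, 20)
share = f"{actual / projected:.0%}"

print(projected, unique, actual)  # 300 850 170
print(share, "of the projection")  # 57% of the projection
```

Changing the duplicate or conversion percentages shows how quickly the gap between projected and actual buyers widens.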

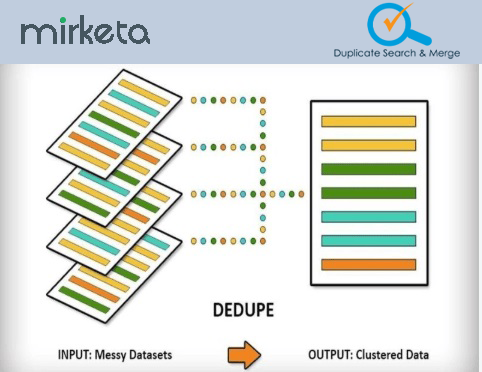

Having highlighted the major ways in which duplicate data reduces the efficiency of various business processes, we recommend checking out DSM for Salesforce. Offered by Mirketa, Duplicate Search and Merge (DSM) is a handy deduplication tool for eliminating duplicate data and incomplete records. It resolves data duplication issues in an easily configurable manner using out-of-the-box Salesforce functionality. Since it runs natively on Salesforce, data does not leave the Salesforce org. This ensures data security, as there is no data transfer at any point.

There are a number of capabilities that make DSM better than almost all other Salesforce deduplication apps:

- Powerful bulk Search & Merge via optimized design.

- Exact search based on multiple criteria.

- Search based on both standard and custom field criteria.

- Deduplication on standard as well as on custom objects.

- Choose the master records manually or leave it to the application to do that for you automatically.

- Export the searched duplicates.

- Re-parenting of child records while merging the Parent records.

- Smart Reporting via an intuitive dashboard.

- Save your searches for future use.

- Job Scheduler.

- Native Salesforce.com deduplication solution.

- Intelligent notification alerts on successful merge with the master record details.

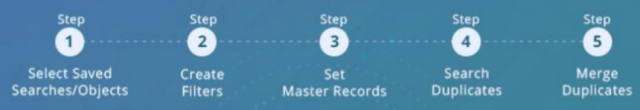

DSM makes data cleansing very easy through its simple yet powerful 5-step approach! The user is guided seamlessly through each of these steps. You select the object with duplicate data and set the desired filters by choosing the right fields. You then select the criteria for deciding the master record and search for the duplicate records, which are then displayed. The last step, merging the duplicates, happens in the background, and an email containing links to the chosen master records is sent to the user. It is indeed pretty simple, isn’t it?
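
To make the idea behind these steps concrete, here is a minimal Python sketch of the general technique: group records on a set of chosen fields, pick a master record by a chosen criterion, and merge the rest into it. It is not DSM's implementation and does not use any Salesforce API. The function names, the sample records, the `last_modified` field and the choice to ignore case and surrounding whitespace when matching are all assumptions made for illustration.

```python
from collections import defaultdict


def find_duplicate_groups(records, match_fields):
    """Group records whose chosen fields match.
    Assumption: matching ignores case and surrounding whitespace."""
    groups = defaultdict(list)
    for rec in records:
        key = tuple(str(rec.get(f) or "").strip().lower() for f in match_fields)
        groups[key].append(rec)
    # Only groups with more than one record are duplicates
    return [group for group in groups.values() if len(group) > 1]


def merge_group(group, master_key):
    """Pick the master record with master_key, then fill its empty
    fields from the other records in the group."""
    master = dict(max(group, key=master_key))  # copy, leave input untouched
    for rec in group:
        for field, value in rec.items():
            if master.get(field) in (None, ""):
                master[field] = value
    return master


# Hypothetical lead records
leads = [
    {"id": 1, "email": "ann@example.com", "last_name": "Lee",
     "phone": "555-0100", "last_modified": "2023-01-10"},
    {"id": 2, "email": "Ann@Example.com ", "last_name": "lee",
     "phone": "", "last_modified": "2023-03-02"},
    {"id": 3, "email": "bob@example.com", "last_name": "Ray",
     "phone": "555-0101", "last_modified": "2023-02-01"},
]

groups = find_duplicate_groups(leads, ["email", "last_name"])
# Master criterion assumed here: the most recently modified record wins
merged = merge_group(groups[0], master_key=lambda r: r["last_modified"])
print(merged["id"], merged["phone"])  # 2 555-0100
```

Here records 1 and 2 form one duplicate group, record 2 becomes the master because it was modified most recently, and its empty phone field is filled from record 1.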

Check out DSM and use it to solve all your duplicate data issues!
